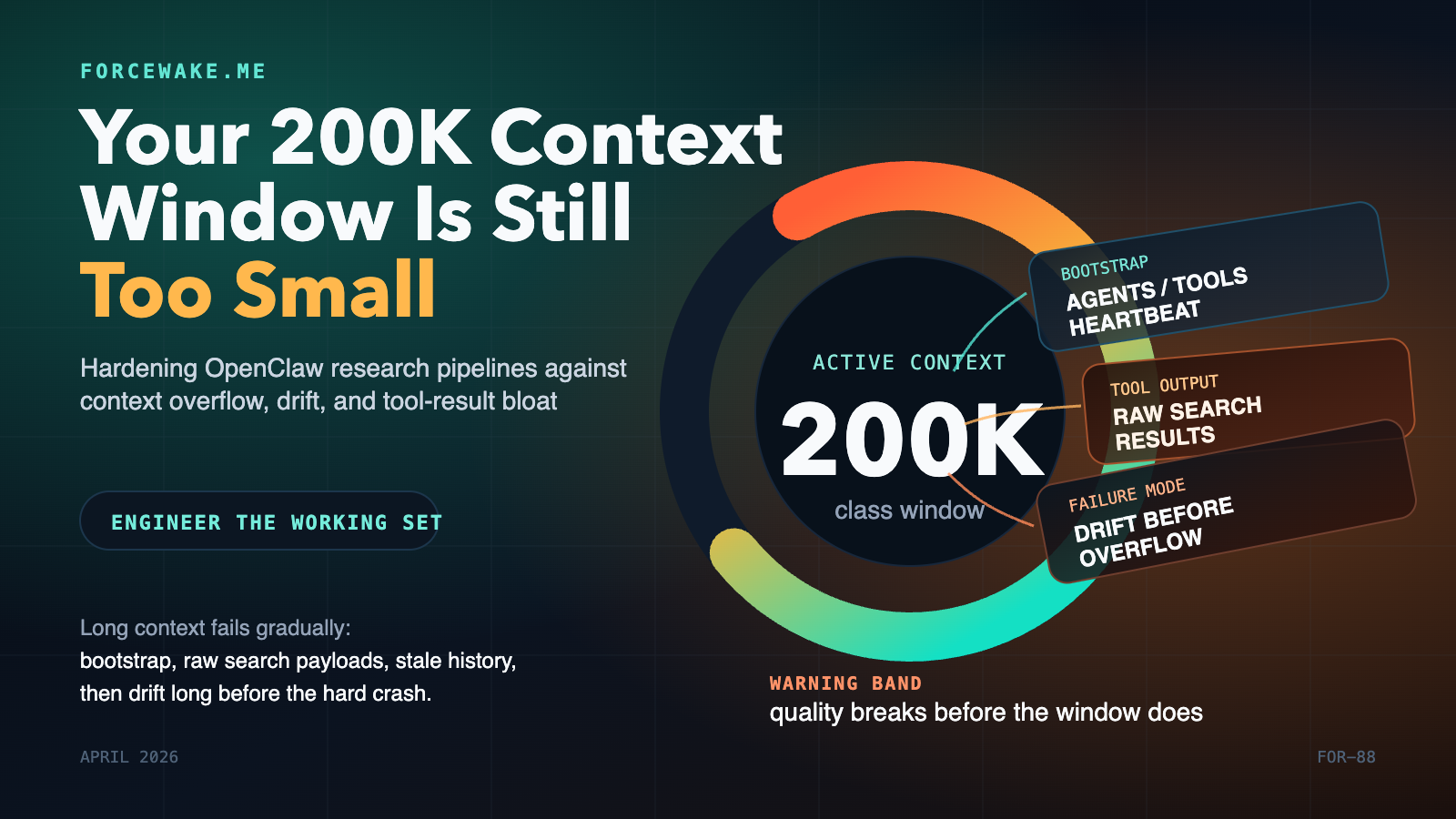

Your 200K Context Window Is Still Too Small: Hardening OpenClaw Research Pipelines Against Context Overflow

Most research-agent failures are not model failures. They are context-budget failures. You give the agent a large context window, then quietly burn it on bootstrap files, tool schemas, verbose search results, stale heartbeat history, and repeated prompt reinjection. The run still looks healthy for a while, but quality has already started to slide. The agent drifts, repeats searches, forgets what it just learned, or falls into overflow recovery loops. As of April 15, 2026, OpenClaw already exposes the right primitives to prevent that: detailed context introspection, isolated cron sessions, lightweight bootstrap context, session pruning, compaction, and heartbeat isolation. The engineering problem is not missing features. It is composing those features into a system that keeps the model’s active working set small, fresh, and task-specific. S1 S2 S3 S4 S5 ...