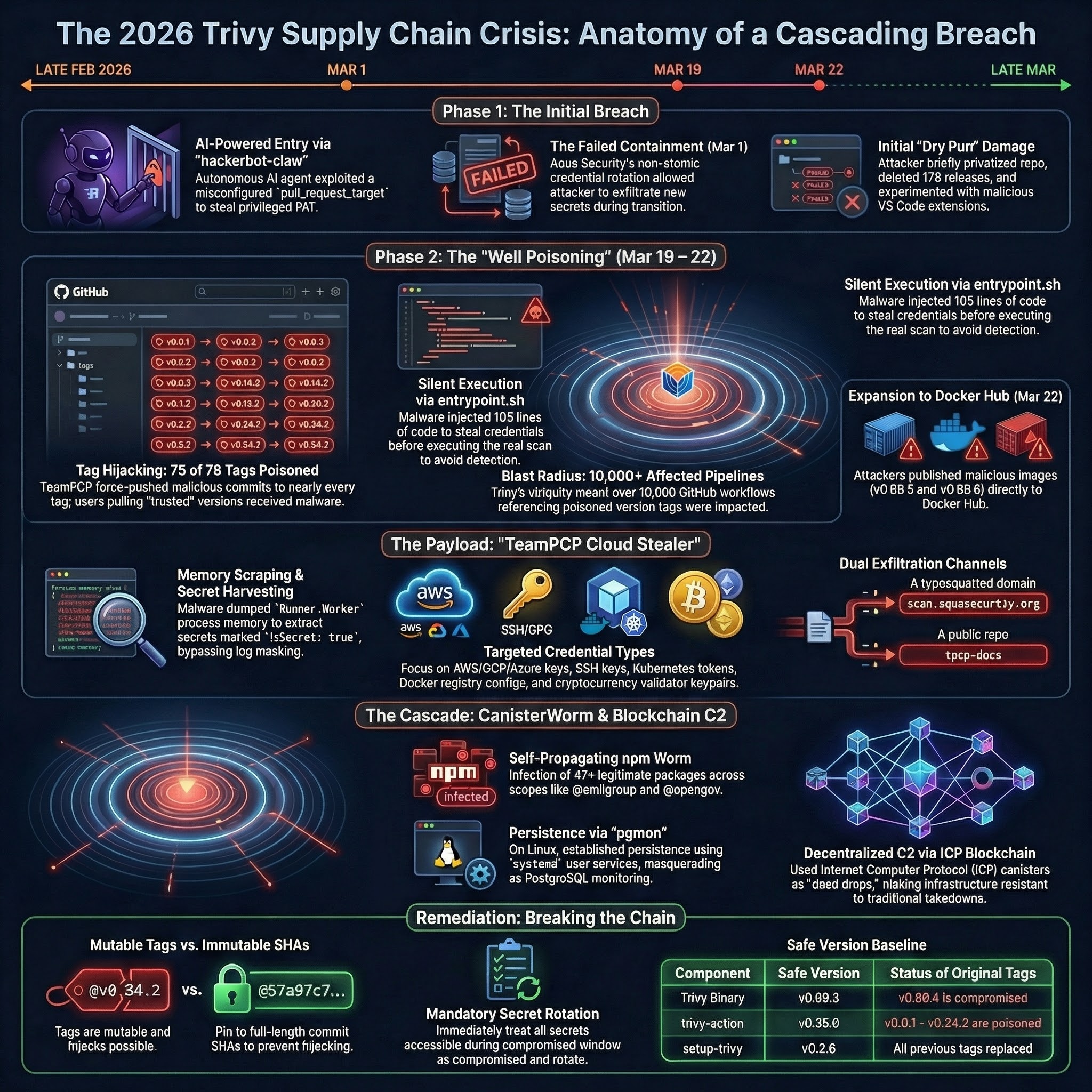

In March 2026, attackers turned Aqua Security’s Trivy ecosystem into a credential-harvesting distribution channel. This was not one bug, one poisoned package, or one bad release. It was a chained failure across GitHub Actions trust, secret rotation, mutable tags, runner memory, registry publishing, and npm’s default willingness to execute third-party code.

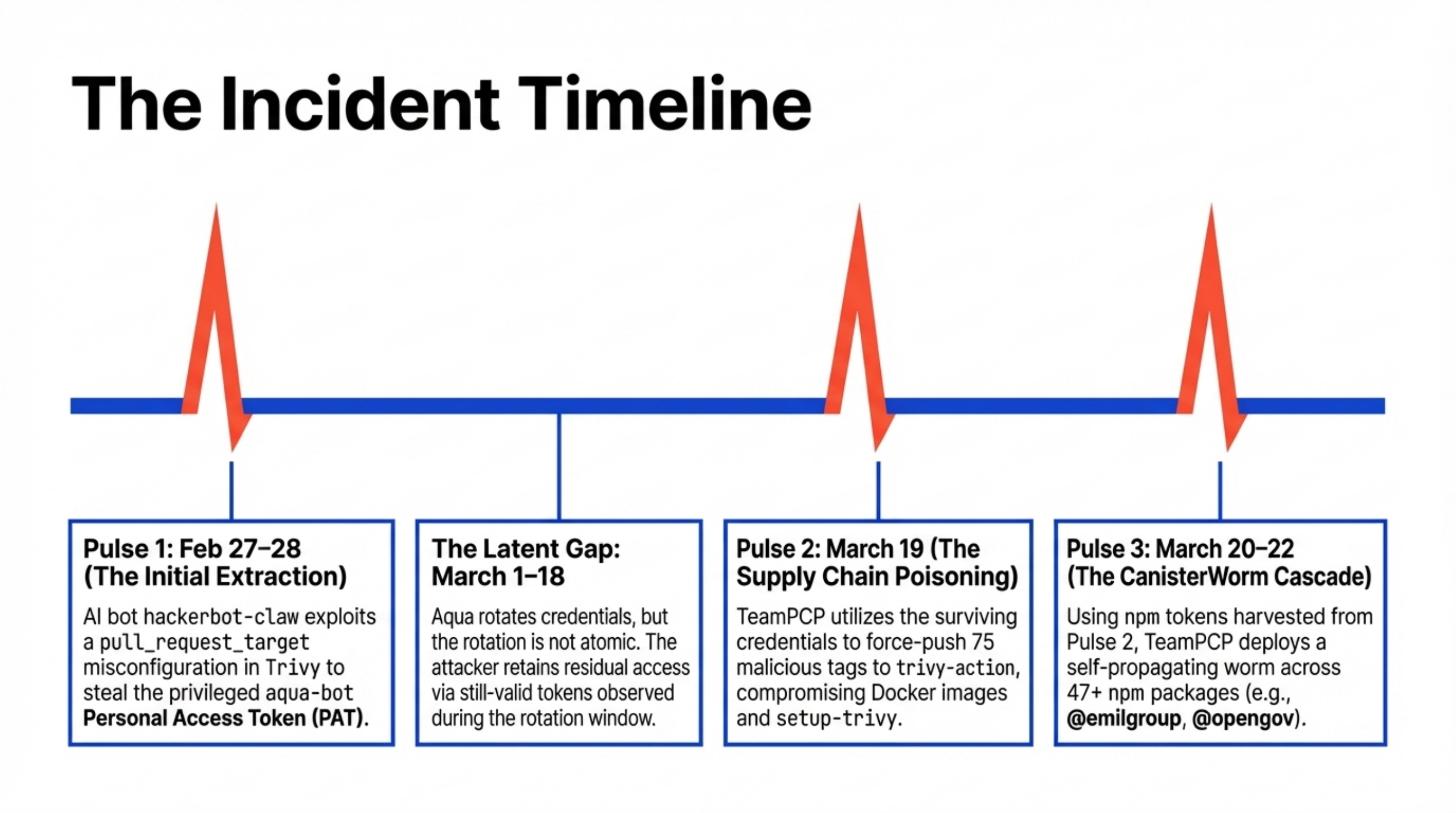

On February 27 and February 28, 2026, the Trivy story started the way a lot of modern software compromises start: not with a zero-day in the scanner, but with automation glued together too loosely around trust. An autonomous agent dubbed hackerbot-claw found a dangerous pull_request_target pattern in Aqua Security’s Trivy repository, exploited it, and stole a privileged aqua-bot token. That first breach was bad enough on its own. The real disaster came after the first incident was supposedly contained.

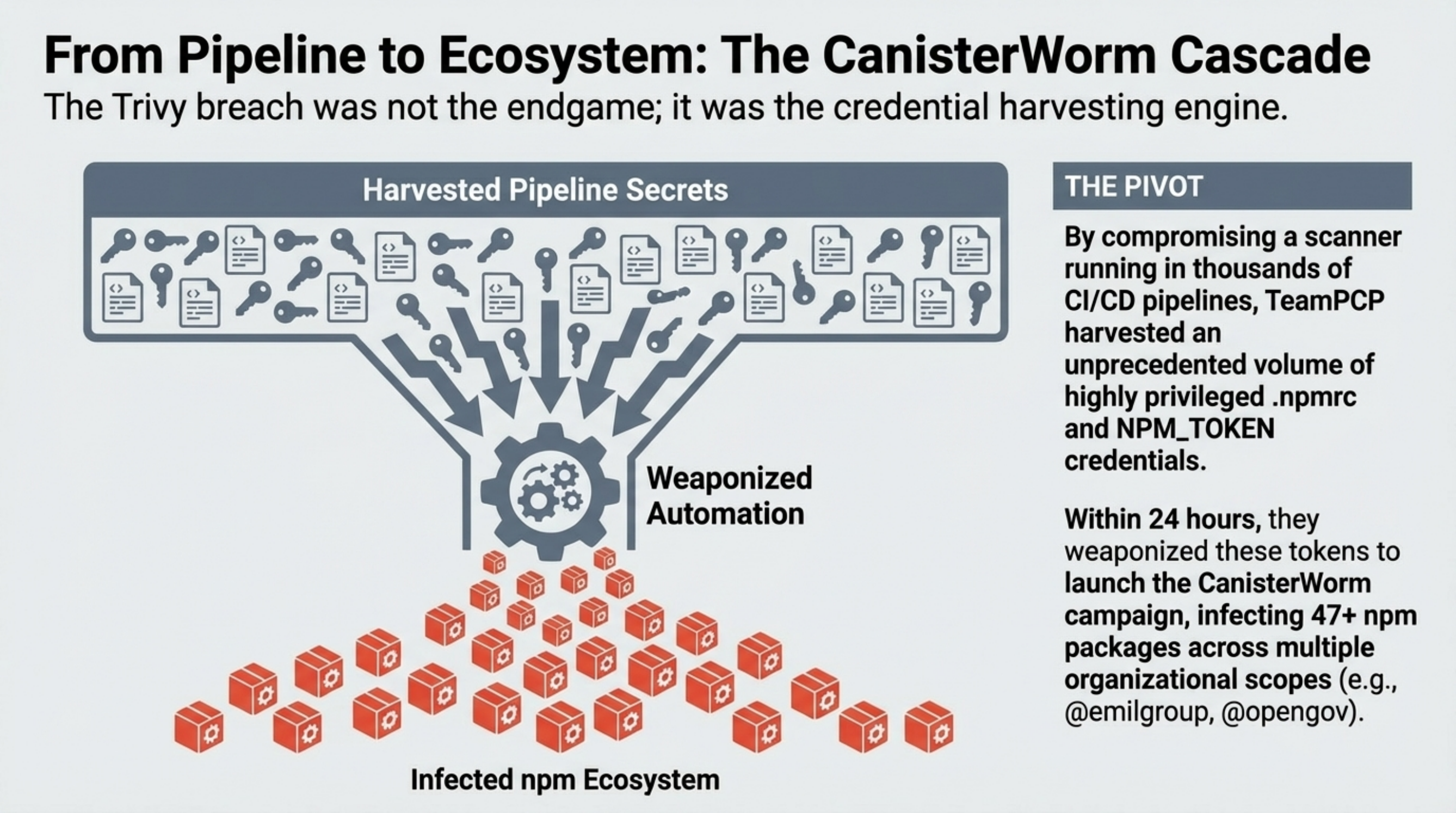

The second wave, launched on March 19, 2026, was what turned this from an embarrassing repository compromise into a supply chain case study that every CI/CD team should read line by line. TeamPCP used residual access left alive by non-atomic secret rotation, force-pushed malicious commits across nearly every published trivy-action tag, slipped trojanized Trivy binaries into trusted distribution paths, and then used the stolen credentials from those downstream executions to launch a self-replicating npm worm called CanisterWorm.

That sequence matters. This was not “malware in Trivy.” It was a trust inversion. A tool that organizations installed to inspect software integrity became the delivery mechanism for credential theft, lateral movement, and further supply chain compromise.

Note on the numbers: the research corpus is consistent on the mechanics and dates, but not on every exact count. It alternates between 75 of 76 and 76 of 77 poisoned trivy-action tags, while the npm spread is reported as 47+ packages in early reporting and 64+ packages in later tallies, with 135 malicious package versions or artifacts overall. Socket’s live campaign page later raised that artifact count to 141 by March 23, 2026. The stable fact is simpler: all but one meaningful trivy-action tag were poisoned, and the worm spread across dozens of legitimate npm packages and well over a hundred malicious versions.

At a Glance

- Initial breach: February 27-28, 2026, via a misused pull_request_target workflow in Trivy’s repository.

- Persistence failure: Aqua rotated credentials after the first breach, but not atomically, which left TeamPCP a live path back in.

- Main distribution hit: March 19, 2026, with poisoned trivy-action tags, compromised setup-trivy, and trojanized v0.69.4.

- Worm pivot: stolen npm publishing tokens were turned into CanisterWorm, which republished malicious patch versions across legitimate namespaces.

- Core lesson: the attack worked because multiple convenience defaults lined up at once: mutable tags, overprivileged CI, long-lived secrets, and install-time code execution.

Phase One: The AI Bot That Opened the Door

The first breach was not a Hollywood “AI hack.” It was more interesting and more uncomfortable than that. hackerbot-claw appears in the corpus as an autonomous agent that systematically scanned public repositories for exploitable GitHub Actions patterns, especially pull_request_target misuse. That trigger is powerful because it runs in the security context of the base repository. Used correctly, it can support safe automation around pull requests from forks. Used carelessly, it hands privileged execution to untrusted input.

That is what happened in Trivy’s apidiff.yaml workflow. The bot submitted a crafted pull request from a fork, got code to execute inside a privileged workflow context, and exfiltrated a Personal Access Token belonging to aqua-bot. From there, the blast radius immediately escaped the normal “bad CI run” category. The attacker could privatize repositories, rename them, delete releases, and tamper with adjacent distribution surfaces.
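A rough way to audit your own repositories for the same class of mistake is sketched below. It is a text heuristic, not a parser, and the sample workflow is hypothetical: it is not the contents of Trivy's apidiff.yaml, which the sources do not reproduce. The function name and the list of "untrusted ref" expressions are assumptions chosen for illustration.

```python
import re

# Heuristic only: a privileged trigger plus a reference to PR-controlled code.
TRIGGER = re.compile(r"\bpull_request_target\b")
UNTRUSTED_REF = re.compile(
    r"github\.event\.pull_request\.head\.(?:sha|ref)|github\.head_ref"
)


def risky_pull_request_target(workflow_text: str) -> list[str]:
    """Return lines that pull PR-controlled refs into a pull_request_target workflow."""
    if not TRIGGER.search(workflow_text):
        return []
    return [
        line.strip()
        for line in workflow_text.splitlines()
        if UNTRUSTED_REF.search(line)
    ]


# Hypothetical workflow used only to exercise the check.
SAMPLE = """\
on:
  pull_request_target:
jobs:
  diff:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
        with:
          ref: ${{ github.event.pull_request.head.sha }}
      - run: make apidiff
"""

if __name__ == "__main__":
    for hit in risky_pull_request_target(SAMPLE):
        print("review:", hit)
```

A hit is a prompt for human review, not proof of exploitability: what matters is whether code from the fork actually executes with the base repository's secrets in scope.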

There are two reasons the first breach matters beyond the novelty of an autonomous attacker.

First, it shows that AI did not replace exploitation. It compressed reconnaissance, target selection, and exploit packaging into a continuous loop. The advantage was not magical reasoning. It was speed, persistence, and breadth. A human red team takes breaks. A bot can scan GitHub for a week straight.

Second, the first incident should have been survivable. A stolen token is serious, but still containable if containment is real. The reason this became a March crisis is that the response left a live bridge standing.

The Fatal Mistake: Secret Rotation That Wasn’t Atomic

The most important technical lesson in this entire incident is not “pin your actions,” though you should. It is this: secret rotation is not remediation if old credentials can still observe or exfiltrate new ones.

After the February breach, Aqua rotated credentials. But the corpus repeatedly describes that rotation as non-atomic. In practice, that means some secrets were replaced while others remained live long enough for the attacker to keep watching the environment. TeamPCP did not need to re-exploit the original workflow from scratch. They used still-valid access during the rotation window to steal the new credentials as they appeared.

This is the kind of failure that incident response playbooks often underweight because it feels operational rather than technical. It is neither. It is a control-plane flaw. If compromised credentials can coexist with fresh credentials during remediation, the attacker can parasitize the recovery itself.
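The difference between the two rotation orders can be made concrete with a toy model. The sketch below does not describe Aqua's actual procedure; the credential names and the plan format are hypothetical. It only encodes the rule from the paragraph above: no replacement credential should be issued while any compromised credential in scope is still valid.

```python
def sequential_plan(names: list[str]) -> list[tuple[str, str]]:
    """Rotate one credential at a time: revoke A, issue A, revoke B, issue B."""
    plan = []
    for name in names:
        plan += [("revoke", name), ("issue", name)]
    return plan


def revoke_first_plan(names: list[str]) -> list[tuple[str, str]]:
    """Revoke everything in scope, then issue replacements."""
    return [("revoke", n) for n in names] + [("issue", n) for n in names]


def has_overlap(plan: list[tuple[str, str]]) -> bool:
    """True if a new credential is issued while an old one is still live."""
    still_live = {name for action, name in plan if action == "revoke"}
    for action, name in plan:
        if action == "revoke":
            still_live.discard(name)
        elif action == "issue" and still_live:
            return True  # an attacker holding a live credential can watch this issue
    return False


if __name__ == "__main__":
    scope = ["ci-pat", "registry-token"]  # hypothetical credential names
    print("sequential overlap:", has_overlap(sequential_plan(scope)))
    print("revoke-first overlap:", has_overlap(revoke_first_plan(scope)))
```

The revoke-first plan accepts an outage between the two phases. That is the cost the model makes visible, and it is the cost the sequential plan avoids by leaving the attacker a window.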

That is the hinge point of the Trivy story. The first breach got them in. The rotation failure let them stay. Everything that followed was a monetization and distribution problem.

Phase Two: Turning Git Tags Into Malware Delivery

On March 19, 2026, TeamPCP moved from foothold to scale.

The campaign had a clear pulse structure: initial extraction in late February, a live rotation gap, mass tag poisoning on March 19, and the npm worm cascade immediately after.

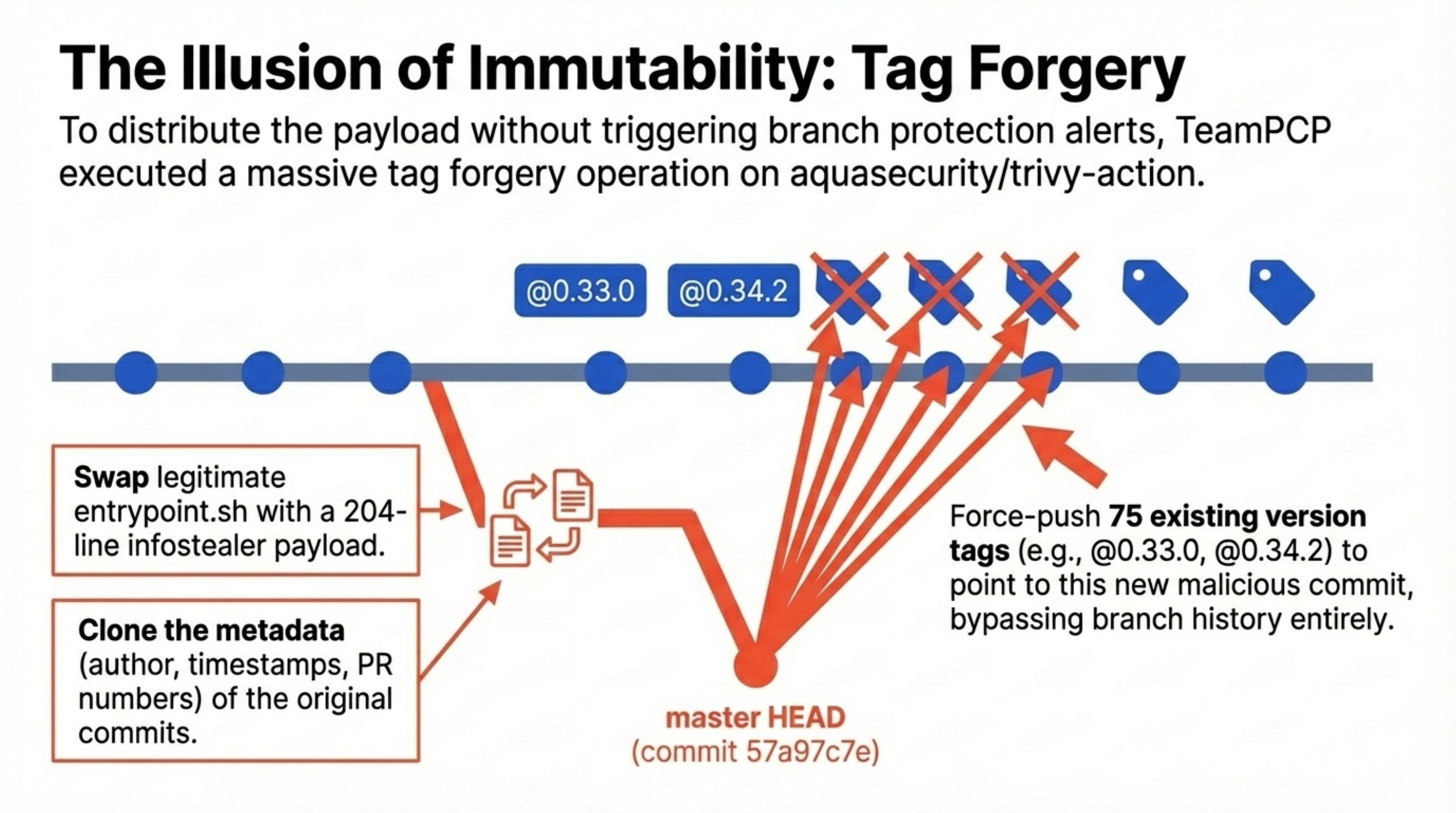

The most elegant part of the second-stage attack was also the most banal: they abused mutable references exactly the way Git allows them to be abused. In GitHub Actions, many organizations still reference actions by tags such as @v1, @0.34.2, or @latest. Those are not immutable artifacts. They are movable pointers. If someone with sufficient repository access force-pushes the tag to a different commit, every downstream workflow that trusts that tag silently executes new code.

That is what happened to aquasecurity/trivy-action. The research bundle is consistent that all but one meaningful version tag were poisoned and that 0.35.0 remained clean. It is also consistent that setup-trivy tags were hijacked and that malicious Trivy binaries were published starting with v0.69.4, while v0.69.3 is treated as the last known clean binary before the March compromise. Malicious Docker images followed as v0.69.5 and v0.69.6 on March 22, 2026.

The attacker did not need to compromise downstream repositories. Repointing trusted tags to a malicious commit was enough to silently swap code in every workflow that consumed those tags.
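One practical consequence is that any uses: reference that is not a full 40-character commit SHA can be repointed by whoever controls the publishing repository. The sketch below lists such references in a workflow file. It is a regex heuristic over text, the function name is an assumption, and the SHA in the sample is a placeholder rather than a real commit.

```python
import re

USES = re.compile(r"^\s*-?\s*uses:\s*([^\s#]+)", re.MULTILINE)
FULL_SHA = re.compile(r"[0-9a-f]{40}")


def unpinned_actions(workflow_text: str) -> list[str]:
    """Return action references that rely on a movable tag or branch."""
    findings = []
    for ref in USES.findall(workflow_text):
        # Local actions and container references are out of scope for this check.
        if ref.startswith("./") or ref.startswith("docker://"):
            continue
        _, _, version = ref.partition("@")
        if not FULL_SHA.fullmatch(version):
            findings.append(ref)
    return findings


# Hypothetical workflow; the 40-character value is a placeholder SHA.
SAMPLE = """\
steps:
  - uses: actions/checkout@0123456789abcdef0123456789abcdef01234567
  - uses: aquasecurity/trivy-action@0.34.2
  - uses: ./local-action
"""

if __name__ == "__main__":
    for ref in unpinned_actions(SAMPLE):
        print("movable reference:", ref)
```

Pinning to a SHA does not make the pinned code trustworthy. It only guarantees that the code reviewed today is the code that runs tomorrow.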

The slide deck in the corpus adds a detail most summaries leave out: the compromise windows were short, sharp, and enough. The poisoned trivy-action tags were live from March 19, 2026 17:43 UTC until March 20, 2026 05:40 UTC. setup-trivy exposure ran from March 19, 2026 17:43 UTC to 21:44 UTC. The trojanized v0.69.4 binary window ran from March 19, 2026 18:22 UTC to 21:42 UTC. The Docker image window was worse: 0.69.4, 0.69.5, and 0.69.6 remained exposed from March 19, 2026 18:24 UTC until March 23, 2026 01:36 UTC. That is one of the ugly truths of CI/CD compromise. The attacker does not need a long dwell time when the distribution channel is already trusted.

| Component | Compromised versions | Last known clean version | Exposure window (UTC) |
|---|---|---|---|
| Trivy binary | v0.69.4 | v0.69.3 | March 19, 2026 18:22 to 21:42 |
| Trivy Docker images | 0.69.4, 0.69.5, 0.69.6 | 0.69.3 | March 19, 2026 18:24 to March 23, 2026 01:36 |
| trivy-action | all meaningful tags up to 0.34.2 | 0.35.0 | March 19, 2026 17:43 to March 20, 2026 05:40 |
| setup-trivy | all tags through v0.2.5 | v0.2.6 | March 19, 2026 17:43 to 21:44 |
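For triage, the exposure windows in the table can be encoded directly and compared against pipeline run timestamps. The sketch below uses only the times reported above. The function names are illustrative, and a timestamp match only means a run could have pulled a poisoned artifact, not that it did.

```python
from datetime import datetime, timezone


def utc(text: str) -> datetime:
    """Parse 'YYYY-MM-DD HH:MM' as a UTC timestamp."""
    return datetime.strptime(text, "%Y-%m-%d %H:%M").replace(tzinfo=timezone.utc)


# Exposure windows as reported in the table (UTC).
WINDOWS = {
    "trivy binary": (utc("2026-03-19 18:22"), utc("2026-03-19 21:42")),
    "trivy docker images": (utc("2026-03-19 18:24"), utc("2026-03-23 01:36")),
    "trivy-action": (utc("2026-03-19 17:43"), utc("2026-03-20 05:40")),
    "setup-trivy": (utc("2026-03-19 17:43"), utc("2026-03-19 21:44")),
}


def exposed_components(run_started: datetime) -> list[str]:
    """Components whose reported window contains the run start time."""
    return [
        name
        for name, (start, end) in WINDOWS.items()
        if start <= run_started <= end
    ]


def window_hours(name: str) -> float:
    """Length of a reported exposure window in hours."""
    start, end = WINDOWS[name]
    return (end - start).total_seconds() / 3600


if __name__ == "__main__":
    for name in WINDOWS:
        print(f"{name}: {window_hours(name):.2f} h")
    print(exposed_components(utc("2026-03-21 12:00")))
```

By this arithmetic the action tags were poisoned for just under 12 hours and the Docker images for a little over 79. Treat the end of a window as a lower bound, since registry caches can keep serving removed images.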

This is why the attack propagated so efficiently. Nobody had to merge a pull request in downstream repos. Nobody had to accept a dependency update. Nobody had to change a workflow file. Pipelines pulled the malware because they were designed to trust publisher-controlled pointers.

The forensics are telling. The malicious tag targets were described as “imposter commits” that cloned the metadata of legitimate history: author names, timestamps, even the texture of prior release commits. But the attack still leaked through Git’s object graph. The corpus highlights a temporal impossibility in the poisoned commits: commits that claimed dates from 2021 or 2022 had parents from 2026. On GitHub, some poisoned releases also exposed the classic symptom of a hijacked historical tag: “0 commits to master” for releases that should have been anchored deep in older history.
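The temporal impossibility is easy to test for once commit metadata is in hand. The sketch below works on an in-memory list so that it stays self-contained. The Commit type, the one-day tolerance, and the sample history are all assumptions, and the sources do not say whether the forged field was the author date or the committer date.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Commit:
    sha: str
    claimed_date: datetime
    parents: tuple[str, ...] = ()


def impossible_commits(
    commits: list[Commit], tolerance: timedelta = timedelta(days=1)
) -> list[str]:
    """Flag commits that claim to be older than one of their own parents."""
    by_sha = {c.sha: c for c in commits}
    flagged = []
    for commit in commits:
        for parent_sha in commit.parents:
            parent = by_sha.get(parent_sha)
            if parent and parent.claimed_date - commit.claimed_date > tolerance:
                flagged.append(commit.sha)
                break
    return flagged


# Hypothetical history: bbb222 claims 2021 but sits on top of a 2026 parent.
HISTORY = [
    Commit("aaa111", datetime(2026, 3, 1, tzinfo=timezone.utc)),
    Commit("bbb222", datetime(2021, 6, 1, tzinfo=timezone.utc), parents=("aaa111",)),
    Commit("ccc333", datetime(2026, 3, 2, tzinfo=timezone.utc), parents=("aaa111",)),
]

if __name__ == "__main__":
    print(impossible_commits(HISTORY))
```

In a real repository the same records could be built from git log output, for example with a format string such as %H %ct %P. The tolerance exists because rebases and clock skew produce small, legitimate inversions. A gap measured in years is not one of them.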

That combination is important for defenders. The branch protection story was mostly irrelevant. These commits did not need to live on a protected branch. They only needed to become the new target behind a trusted tag.

What the Payload Actually Did

The payload itself was not subtle. It did not need to be.

The research corpus describes a malicious entrypoint.sh in the action path and a trojanized binary path in the release path. Both variants followed the same logic:

- Run the credential theft first.

- Exfiltrate what matters.

- Execute the legitimate Trivy scan as cover so the job still “works.”

That last step matters. Most compromised CI/CD tooling is caught either because it breaks builds or because it generates obviously alien output. TeamPCP’s payload treated the scan as camouflage. The job could still succeed while the runner was being looted.

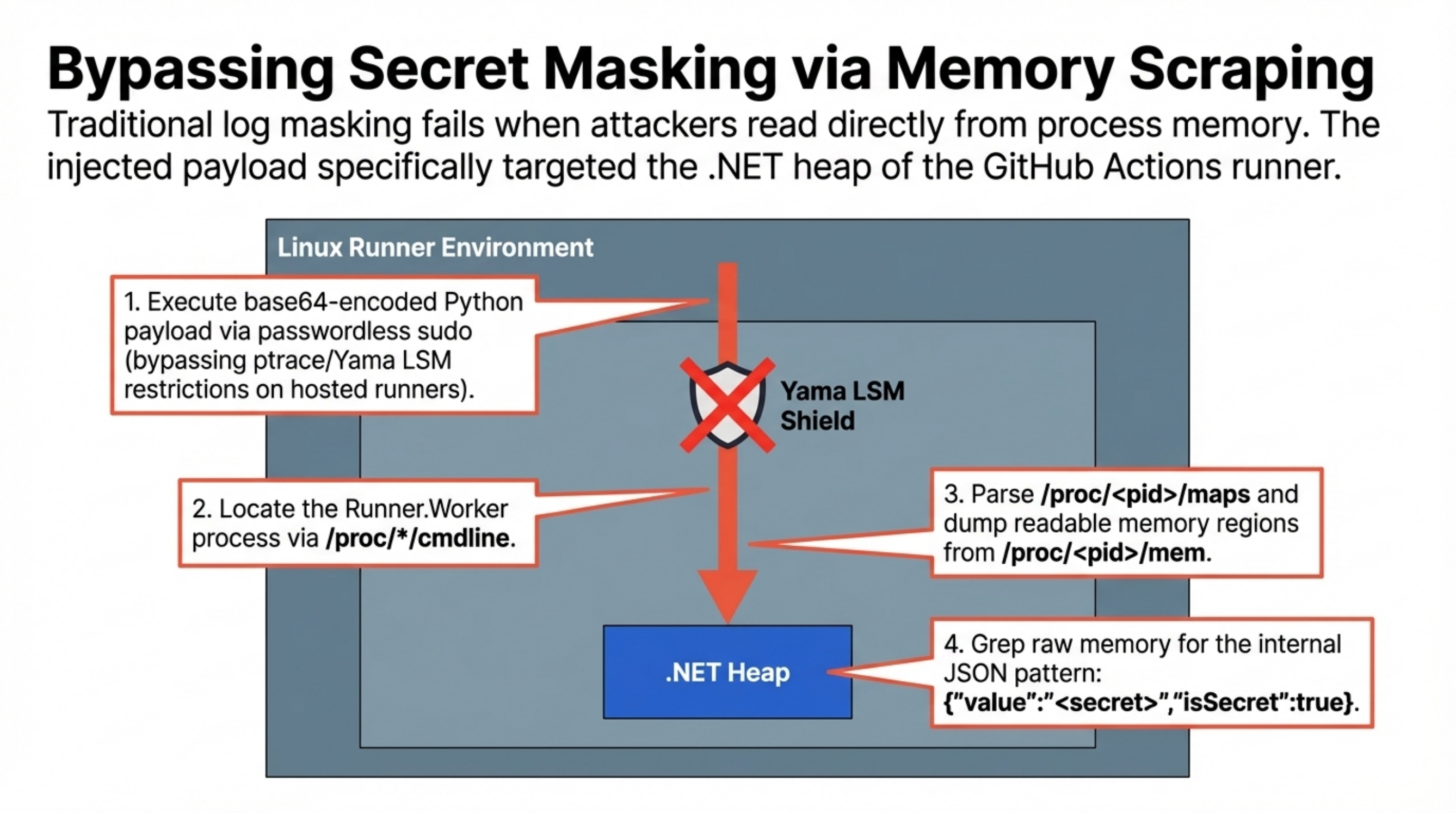

The most important technical detail in the looting phase was memory scraping.

GitHub masks secrets in logs. That protection is useful, but it is often misunderstood as secret protection rather than output redaction. On Linux runners, the payload used sudo to inspect the Runner.Worker process, parsed /proc/<pid>/maps to find readable regions, dumped /proc/<pid>/mem, and searched the resulting memory for GitHub’s internal secret representation, including the JSON marker "isSecret":true. In other words, the malware did not try to trick GitHub into printing secrets. It read them directly out of the runner’s heap before masking even mattered.

This is the part many teams miss: GitHub masked logs, but the malware went under the logging layer and read secrets straight from the runner’s memory.

That is the move that should permanently change how people think about CI/CD secret handling. If your workflow loads a secret into process memory, a malicious action running with enough privilege does not care whether your logs are clean.
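A toy model makes the distinction between redaction and protection concrete. The code below is not GitHub's masking implementation. It is a minimal stand-in, with a hypothetical token and variable name, showing that scrubbing output leaves the value in the process untouched.

```python
def mask_output(line: str, secrets: list[str]) -> str:
    """Redact known secret values from a line before it is written to a log."""
    for secret in secrets:
        if secret:
            line = line.replace(secret, "***")
    return line


# Hypothetical secret held by the job's process.
SECRET = "hypothetical-token-123"
ENV = {"DEPLOY_TOKEN": SECRET}

# What an observer of the log sees.
LOG_LINE = mask_output(f"using token {ENV['DEPLOY_TOKEN']}", [SECRET])

if __name__ == "__main__":
    print(LOG_LINE)
    # The value itself is still in memory, readable by anything with
    # enough privilege over this process.
    print(ENV["DEPLOY_TOKEN"] == SECRET)
```

Masking protects people who read logs. It does nothing against code that runs beside the secret with the privilege to inspect the process holding it.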

The payload then widened the search. Across the reports, the targeted material includes:

- AWS, GCP, and Azure credentials

- Kubernetes service account tokens

- Docker registry credentials

- Terraform state and .env files

- SSH and GPG keys

- npm publishing tokens from .npmrc

- shell histories

- cryptocurrency wallet and validator key material

The exfiltration design was more mature than a smash-and-grab script. The bundle was encrypted with AES-256-CBC, with session material wrapped using an RSA-4096 public key, then sent to the typosquatted domain scan.aquasecurtiy.org, anchored in the corpus to IP 45.148.10.212.

And if the primary exfiltration path failed, the malware had a fallback that is both crude and effective: use a stolen PAT to create a public GitHub repository named tpcp-docs on the victim’s account and upload the stolen data as a release asset. That is not just exfiltration. It is an IOC that doubles as public proof that the victim’s identity boundary has already collapsed.

The Docker branch of the incident added another operational lesson. By March 22, 2026, the official latest Docker tag pointed at compromised 0.69.6, and public reporting noted that even after image removal, caches such as mirror.gcr.io continued serving the poisoned layers. Deleting a bad image is not the same thing as erasing its distribution path.
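The container-side equivalent of SHA pinning is referencing images by digest, since a tag such as latest can be repointed at any time. Below is a minimal check, assuming image references are available as plain strings. The registry name and the all-zero digest are placeholders.

```python
import re

# An image reference is immutable only when it ends in a sha256 digest.
DIGEST = re.compile(r"@sha256:[0-9a-f]{64}$")


def is_digest_pinned(image_ref: str) -> bool:
    """True if the reference names an exact image digest rather than a tag."""
    return bool(DIGEST.search(image_ref))


# Hypothetical references; the digest is a placeholder, not a real image.
REFS = [
    "registry.example/trivy:latest",
    "registry.example/trivy:0.69.3",
    "registry.example/trivy@sha256:" + "0" * 64,
]

if __name__ == "__main__":
    for ref in REFS:
        status = "pinned" if is_digest_pinned(ref) else "movable tag"
        print(f"{status}: {ref}")
```

A digest also answers the cache problem from the other direction: if a mirror serves different content under the same name, the digest will not match and the pull fails instead of silently succeeding.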

The Real Innovation Was Not the Stealer. It Was the Pivot.

A lot of supply chain incidents stop after credential theft. This one used stolen credentials to manufacture new victims.

CanisterWorm was the multiplier. Once TeamPCP had npm tokens from compromised runners and developer environments, they did not merely cash out access. They operationalized it into automated propagation.

The Trivy compromise was the feeder system. The real scale came after stolen npm tokens were converted into automated republishing across legitimate package namespaces.

The mechanism was brutally direct:

- Search .npmrc files and environment variables for publishing tokens.

- Query npm to enumerate packages maintained by the compromised identity.

- Increment patch versions.

- Republish those legitimate packages with malicious postinstall logic and deployment helpers such as deploy.js.

This is how a compromise of one CI workflow mutates into an ecosystem event. The victim stops being the final target and becomes an infection source.

The corpus places the early wave in scopes such as @emilgroup, @opengov, and @teale.io, with later counts rising to dozens of packages and 135 malicious artifacts or versions. Live campaign tracking pushed that higher to 141 affected artifacts by March 23, 2026. That difference between “packages” and “artifacts” is worth keeping straight. A single compromised maintainer identity can fan out into many package versions very quickly, especially when the worm only needs to bump patch numbers to look routine.

This is also where npm’s default execution model made the campaign stronger. postinstall hooks are legitimate and common enough that malware can hide inside normal package ergonomics. If the ecosystem will eagerly run third-party install scripts, a stolen publishing token is not just a publishing credential. It is remote code execution sold as package maintenance.
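On the defensive side, a patch release that suddenly gains an install-time hook is a cheap signal to check for. The sketch below compares two package.json documents and reports hooks that are new or changed. The manifests are hypothetical, and the exact postinstall command used by the worm is not given in the sources, so the one shown is illustrative.

```python
import json

# npm lifecycle scripts that run automatically during installation.
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def new_install_hooks(previous_manifest: str, candidate_manifest: str) -> dict[str, str]:
    """Return install-time hooks that are new or changed in the candidate version."""
    old = json.loads(previous_manifest).get("scripts", {})
    new = json.loads(candidate_manifest).get("scripts", {})
    return {
        hook: new[hook]
        for hook in INSTALL_HOOKS
        if hook in new and new[hook] != old.get(hook)
    }


# Hypothetical manifests for two consecutive patch versions.
PREVIOUS = json.dumps(
    {"name": "example-lib", "version": "1.4.2", "scripts": {"test": "node test.js"}}
)
CANDIDATE = json.dumps(
    {
        "name": "example-lib",
        "version": "1.4.3",
        "scripts": {"test": "node test.js", "postinstall": "node deploy.js"},
    }
)

if __name__ == "__main__":
    print(new_install_hooks(PREVIOUS, CANDIDATE))
```

A new hook is not malicious by definition, but a routine-looking patch bump that introduces one is exactly the shape this campaign relied on, so it is worth a manual look before the version reaches a build.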

Why the ICP Canister Matters

CanisterWorm’s command-and-control story is one of the more strategically interesting parts of the campaign.

Instead of relying only on ordinary domains, the worm used an Internet Computer Protocol canister as a decentralized dead drop. The research bundle repeatedly references canister tdtqy-oyaaa-aaaae-af2dq-cai.raw.icp0.io. That matters because it decouples the on-host implant from a fixed domain or payload location. The operator can rotate second-stage instructions without changing the worm already running on disk.

This is not cyberpunk theater. It is operational resilience. A dead-drop model means defenders cannot assume that taking down one web endpoint neutralizes the implant. It also introduces an awkward policy problem for enterprises: blocking one bad domain is easy; deciding what to do about traffic to shared decentralized infrastructure is not.

The corpus also notes a dormant or “disarmed” state in which the canister returned a YouTube link. That is a clever control channel. It lets the operator quiet the worm without burning the infrastructure, which buys time, lowers noise, and complicates incident triage.

Persistence, Because Not Every Victim Was Ephemeral

On GitHub-hosted runners, many infections were transient because the runner lifecycle itself is ephemeral. On self-hosted Linux systems and developer machines, the attackers aimed for something stickier.

The recurring persistence marker in the research package is ~/.config/systemd/user/pgmon.service, backed by Python payloads described as service.py or sysmon.py, with secondary payload staging under /tmp/pglog. The naming is not sophisticated. It is sufficient. “pgmon” sounds like a PostgreSQL monitoring helper, which is exactly the sort of low-curiosity label that survives in crowded Linux estates.
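Checking a self-hosted runner or workstation for these markers takes a few lines. The sketch below looks only for the two paths named above. The function name is an assumption, and a clean result does not rule out compromise, since file names are trivial for an operator to change.

```python
from pathlib import Path


def persistence_markers(home: Path, tmp: Path = Path("/tmp")) -> list[Path]:
    """Return the reported persistence paths that exist on this host."""
    candidates = [
        home / ".config" / "systemd" / "user" / "pgmon.service",
        tmp / "pglog",
    ]
    return [path for path in candidates if path.exists()]


if __name__ == "__main__":
    found = persistence_markers(Path.home())
    if found:
        for path in found:
            print("marker present:", path)
    else:
        print("no known markers found")
```

Run it per user account, because user-level systemd units live under each user's home directory rather than in a system-wide location.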

This is a useful reminder that supply chain attacks do not stay supply-chain-shaped once they land. They collapse into host intrusion, persistence, and credential theft on whatever machine executes the payload.

Why This Incident Feels Different From Older Supply Chain Attacks

Every major software supply chain event gets compared to SolarWinds. That comparison is usually lazy. This one deserves a more precise frame.

SolarWinds was a build system compromise associated with strategic espionage. Codecov centered on a poisoned uploader script and large-scale credential theft. tj-actions/changed-files showed just how much damage mutable action tags can do when a popular GitHub Action is retargeted. ua-parser-js demonstrated how malicious npm releases plus install-time execution can reach developers fast. XZ showed what patient social infiltration can look like when the attacker plays a long game inside a critical dependency.

Trivy in March 2026 is different because it stacked several of those patterns into one chain:

- AI-assisted reconnaissance and initial CI exploitation

- failed remediation that preserved attacker visibility

- mutable Git tag abuse for silent downstream execution

- memory scraping to bypass secret masking

- publisher-token theft

- self-propagating npm republishing

- resilient C2 via decentralized infrastructure

That combination is what makes the incident memorable. The security scanner was not merely compromised. It became a credential pump feeding a worm.

It also helps explain why the blast radius is hard to count cleanly. The source pack cites more than 10,000 pipelines at risk, and its comparison table says 767 repositories were analyzed with 45 confirmed compromised public runs. Those are not contradictory numbers. They describe different surfaces: theoretical downstream exposure versus publicly observed execution. The private side of the incident is, by definition, the part nobody outside the victims can count.

Detection Was Possible, but Only for Teams Looking in the Right Place

The collected material attributes some of the earliest useful detections to behavioral monitoring rather than to conventional signature checks. That fits the mechanics.

If you were looking for vulnerable package metadata, you would miss the most important action-stage behavior. If you were looking for broken jobs, you could miss jobs that still completed successfully. If you were looking only at logs, memory scraping could stay invisible.

The detections that mattered were things like:

- outbound connections to scan.aquasecurtiy.org

- egress to 45.148.10.212

- traffic to the ICP dead-drop

- reads against /proc/<pid>/mem

- suspicious access to /proc/*/environ

- user-level systemd service creation such as pgmon.service

- sudden repository creation of tpcp-docs

- unauthorized patch bumps in npm namespaces

- historical action tags whose target commits no longer fit the repository timeline
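Several of these signals reduce to simple matching once telemetry is normalized. The sketch below assumes a hypothetical event schema with optional destination, path, and repo_created fields. Real sensors and audit logs will use different field names, and the indicator values are the ones reported in this article.

```python
import re

NETWORK_IOCS = {
    "scan.aquasecurtiy.org",
    "45.148.10.212",
    "tdtqy-oyaaa-aaaae-af2dq-cai.raw.icp0.io",
}
PATH_IOCS = (
    re.compile(r"^/proc/\d+/(?:mem|environ)$"),
    re.compile(r"/\.config/systemd/user/pgmon\.service$"),
)
REPO_IOC = "tpcp-docs"


def match_event(event: dict) -> list[str]:
    """Return the indicators a single normalized event matches."""
    hits = []
    destination = event.get("destination", "")
    if destination in NETWORK_IOCS:
        hits.append(f"network:{destination}")
    path = event.get("path", "")
    if any(pattern.search(path) for pattern in PATH_IOCS):
        hits.append(f"path:{path}")
    if event.get("repo_created") == REPO_IOC:
        hits.append(f"repo:{REPO_IOC}")
    return hits


# Hypothetical events in the assumed schema.
EVENTS = [
    {"destination": "scan.aquasecurtiy.org"},
    {"path": "/proc/4242/mem"},
    {"repo_created": "tpcp-docs"},
    {"destination": "api.github.com", "path": "/home/runner/work"},
]

if __name__ == "__main__":
    for event in EVENTS:
        print(event, "->", match_event(event))
```

The /proc patterns will also match benign tooling, such as process inspectors reading environ, so they are most useful when correlated with the process that performed the read and with the network indicators.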

Another signal mattered socially, not just technically: attackers reportedly flooded GitHub discussions on March 20, 2026 to bury technical warnings and slow community coordination. In this campaign, incident-response sabotage was part of the tradecraft.

That detection model is worth underlining because it is how defenders need to think about CI now. In the Trivy incident, the decisive signal was not “a vulnerable scanner version exists.” It was “a trusted scanner is behaving like malware.”

The Defensive Lessons Are Brutal and Specific

This incident does not need vague takeaways. It gives us concrete rules.

First, stop pretending version tags are integrity controls. In GitHub Actions, pin third-party actions to full commit SHAs. For container images, pin immutable digests. Publisher-controlled names are convenience features, not security boundaries.

Second, redesign incident response around atomic revocation. Revoke first. Reissue second. If availability takes a hit, take the hit. A “graceful” rotation that leaves a compromised identity alive long enough to observe new credentials is not graceful. It is attacker-assisted continuity.

Third, remove long-lived cloud credentials from CI wherever possible. Use OIDC and short-lived federated credentials instead of static keys parked in runner environments and filesystems.

Fourth, treat CI runners as hostile territory once third-party code executes there. Monitor for /proc memory access, filesystem sweeps, and suspicious outbound traffic. GitHub log masking is not a defense against local process inspection.

Fifth, disable npm lifecycle scripts by default in build environments unless there is a hard reason not to. ignore-scripts is ugly, but so is letting a stolen token become a worm.
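Whether a build environment actually has that setting is easy to verify. The sketch below reads .npmrc text and reports whether ignore-scripts is enabled. It handles only simple key=value lines and comment lines, which is an assumption about how the file is written, and it does not account for settings supplied through environment variables or command-line flags.

```python
def ignores_scripts(npmrc_text: str) -> bool:
    """True if the last ignore-scripts setting in the file is 'true'."""
    value = None
    for raw in npmrc_text.splitlines():
        line = raw.strip()
        # Skip blanks and comment lines.
        if not line or line.startswith(("#", ";")):
            continue
        key, sep, val = line.partition("=")
        if sep and key.strip() == "ignore-scripts":
            value = val.strip().lower()
    return value == "true"


# Hypothetical file contents.
HARDENED = "registry=https://registry.npmjs.org/\nignore-scripts=true\n"
DEFAULT = "registry=https://registry.npmjs.org/\n; ignore-scripts=true\n"

if __name__ == "__main__":
    print("hardened:", ignores_scripts(HARDENED))
    print("default:", ignores_scripts(DEFAULT))
```

Packages that genuinely need a build step will break under this setting, which is the point: each exception becomes an explicit decision instead of a default.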

Sixth, stop using pull_request_target as a convenience hammer. If you need privileged automation around pull requests from forks, split the design. Use a low-privilege workflow to collect metadata and a separate workflow_run stage to perform privileged operations in a trusted context.

Finally, classify security tools as privileged third-party code, not as honorary infrastructure. Trivy was trusted because it was a security product. That trust raised its blast radius. A scanner with access to cloud credentials, registries, cluster tokens, and the Docker socket is not “safe by role.” It is high-value executable code that deserves the same containment you would apply to any other powerful dependency.

The Deeper Story: Convenience Lost to Integrity

The Trivy and CanisterWorm campaign is a story about convenience defaults colliding all at once.

pull_request_target made contributor automation easy.

Mutable tags made workflow syntax easy.

Static credentials made CI setup easy.

postinstall made package ergonomics easy.

Sequential rotation made incident response operationally easy.

And every one of those choices widened the attacker’s lane.

That is why this incident will stick. It was not powered by a single dazzling exploit. It was powered by the gap between how developers describe trust and how systems actually enforce it. TeamPCP did not need to break the idea of software supply chain security. They only had to route around the parts we still leave mutable, implicit, and over-privileged.

On March 19, 2026, the trusted scanner became the attack path. On March 20, 2026, the stolen credentials became a worm. That is the sequence every engineering leader should remember.

If your pipeline can silently replace a dependency, silently expose secrets in memory, silently publish new packages, and silently keep working while it does all three, you do not have a secure delivery chain. You have a very fast infection path.